J. Cent. South Univ. Technol. (2009) 16: 0640-0646

DOI: 10.1007/s11771-009-0106-3

Automated retinal blood vessels segmentation based on simplified PCNN and fast 2D-Otsu algorithm

YAO Chang(姚 畅), CHEN Hou-jin(陈后金)

(School of Electronics and Information Engineering, Beijing Jiaotong University, Beijing 100044, China)

Abstract: According to the characteristics of dynamic firing in pulse coupled neural network (PCNN) and of regional configuration in the retinal blood vessel network, a new method combining simplified PCNN with a fast 2D-Otsu algorithm was proposed for automated retinal blood vessels segmentation. Firstly, a 2D Gaussian matched filter was used to enhance the retinal images, and simplified PCNN was employed to segment the blood vessels by firing neighborhood neurons. Then, the fast 2D-Otsu algorithm was introduced to search for the best segmentation result and the number of iterations with less computation time. Finally, the whole vessel network was obtained by analyzing the regional connectivity. Experiments on the public Hoover database indicate that the new method achieves, on average, a true positive rate of 0.803 5 and a false positive rate of 0.028 0. According to the test results, the proposed method performs much better than the Hoover algorithm and the method based on PCNN and 1D-Otsu.

Key words: blood vessel segmentation; pulse coupled neural network (PCNN); Otsu; neuron

1 Introduction

Retinal blood vessels are the only small vessels that can be observed directly and non-invasively, and they have many observable features, including diameter, color and curvature. Diseases such as diabetes, hypertension and arteriosclerosis can change these features. Therefore, detecting and extracting the vessels in retinal images can help eye care specialists with diagnosis, treatment evaluation and clinical research [1].

Previous retinal vessel segmentation methods can be classified into two categories: window-based [2-3] and tracking-based [4-5]. Window-based methods examine the properties of the window surrounding each pixel and emphasize the pixels whose window matches a given model. Tracking-based methods use a vessel profile model: they start from some initial points and trace incrementally along the path that best matches the profile model. HOOVER et al [6] proposed an improved method based on piecewise threshold probing, which can be regarded as an integration of window-based and tracking-based techniques. Because the width of retinal vessels varies from large to small and the local contrast of vessels is unstable, especially in unhealthy retinal images, there is still considerable room for improvement in retinal vessel segmentation methods.

Pulse coupled neural network (PCNN) [7] differs from traditional artificial neural networks [8]: its model has a biological background and is based on experimental observations of synchronous pulse bursts in the cat visual cortex. In the PCNN model, neurons with similar stimuli can fire at the same time, which can compensate for spatial discontinuity and better preserve the region information of images [9]. These properties are beneficial to image segmentation. However, the model cannot indicate which iteration gives the best segmentation result and cannot control the number of iterations, which affects the segmentation result. An automated image segmentation method using PCNN and image’s entropy was proposed in Ref.[10], but it performs poorly on small objects, and an image segmentation method based on PCNN and the 1D-Otsu algorithm was proposed in Ref.[11].

We proposed a fast 2D-Otsu algorithm for image segmentation by using a distributed genetic algorithm based on migration strategy [12], and the simulation results indicated that the fast 2D-Otsu method not only performed as well as conventional 2D-Otsu for images with low signal-to-noise ratio (SNR), but also ran more quickly. So in this work, considering the low contrast of retinal images, a new method based on simplified PCNN and the fast 2D-Otsu algorithm was proposed for automated retinal blood vessels segmentation. Simplified PCNN was employed to segment the blood vessels by firing neighborhood neurons, and the fast 2D-Otsu algorithm was used to search for the best segmentation result with less computation time.

2 Preprocessing for retinal images

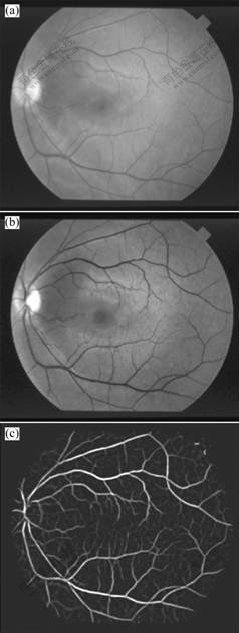

The original retinal images were taken from the public Hoover database [13], and all of them were standard RGB images. When the RGB components of a color image were visualized separately, the green channel showed the best vessel/background contrast, whereas the red and blue channels showed lower contrast and were very noisy. Therefore, the green channel was selected. Fig.1(a) shows an original image, and Fig.1(b) shows the green channel image.

Fig.1 Preprocessing of retinal image: (a) Original retinal image; (b) Green channel image; (c) MFR image

In order to improve the contrast between vessels and background, 12 Gaussian matched filter templates (16×16), one for each direction considered, were convolved with the green channel image to enhance the vessel pixels, and the maximum convolution response was taken as the intensity of each enhanced pixel [2]. Fig.1(c) shows a matched filter response (MFR) image.
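The matched-filter enhancement can be sketched as follows. This is a minimal pure-Python illustration, not the authors' implementation: the kernel profile follows the general matched-filter idea of Ref.[2], while `sigma=2.0`, `length=9.0`, the 15° angular step, the replicated image border and the function names `matched_kernels` and `matched_filter_response` are assumptions made for the example. Only the 12 templates, the 16×16 size and the "maximum response" rule come from the text.

```python
import math

def matched_kernels(size=16, sigma=2.0, length=9.0, n_angles=12):
    """Build n_angles zero-mean, rotated Gaussian matched-filter kernels."""
    half = size // 2
    kernels = []
    for k in range(n_angles):
        theta = math.pi * k / n_angles           # 0, 15, ..., 165 degrees
        c, s = math.cos(theta), math.sin(theta)
        kern = [[0.0] * size for _ in range(size)]
        support = []
        for i in range(size):
            for j in range(size):
                x, y = j - half, i - half
                u = x * c + y * s                # coordinate across the vessel
                v = -x * s + y * c               # coordinate along the vessel
                if abs(u) <= 3 * sigma and abs(v) <= length / 2:
                    # Vessels are darker than background, hence the minus sign.
                    kern[i][j] = -math.exp(-u * u / (2 * sigma * sigma))
                    support.append((i, j))
        mean = sum(kern[i][j] for i, j in support) / len(support)
        for i, j in support:
            kern[i][j] -= mean                   # zero response on flat regions
        kernels.append(kern)
    return kernels

def matched_filter_response(img, kernels):
    """Return, per pixel, the maximum response over all kernels."""
    h, w = len(img), len(img[0])
    size = len(kernels[0])
    half = size // 2
    out = [[0.0] * w for _ in range(h)]
    for r in range(h):
        for col in range(w):
            best = -math.inf
            for kern in kernels:
                acc = 0.0
                for i in range(size):
                    rr = min(max(r + i - half, 0), h - 1)   # replicate border
                    k_row, i_row = kern[i], img[rr]
                    for j in range(size):
                        kv = k_row[j]
                        if kv:
                            cc = min(max(col + j - half, 0), w - 1)
                            acc += kv * i_row[cc]
                best = max(best, acc)
            out[r][col] = best
    return out

# Synthetic 24x24 "green channel": bright background, dark vertical vessel.
image = [[200.0] * 24 for _ in range(24)]
for row in image:
    for c in (11, 12, 13):
        row[c] = 50.0
mfr = matched_filter_response(image, matched_kernels())
```

On this synthetic image the response is strongly positive on the vessel and essentially zero on the flat background, which is the contrast improvement the MFR image is meant to provide. A practical implementation would use an array library rather than nested loops.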

3 Retinal blood vessels segmentation

3.1 Simplified PCNN model

Based on the phenomenon of synchronous pulse bursts in the cat visual cortex, ECKHORN et al [7] proposed a linking field network, which, after some modifications, became PCNN. In practice, applying PCNN directly to image processing has several disadvantages, such as too many parameters, the difficulty of determining them, and long run time. To simplify PCNN while keeping its performance, a simplified PCNN model was introduced for retinal blood vessels segmentation, which makes PCNN easy to analyze, control and implement in hardware. Every neuron of the simplified PCNN consists of three parts: the receptive field, the modulation field, and the pulse generator. The neuron structure is shown in Fig.2, and the equations are as follows:

Fij[n]=Sij (1)

(2)

Uij[n]=Fij[n](1+βLij[n]) (3)

(4)

(5)

where Sij is the external stimulation signal (the intensity of the pixel); Fij is the feedback input, which here consists only of the external stimulation signal Sij; Lij is the linking input; Uij is the internal activity; θij is the dynamic threshold; Yij is the pulse output; β is the linking constant; W is the linking weight matrix; VL is the linking amplitude constant; αθ is the decay constant of the dynamic threshold; Vθ is the threshold amplitude constant; and n is the iteration number. In image segmentation, the simplified PCNN is a single layer 2D array of laterally linked neurons. The number of neurons in the network is equal to the number of pixels in the input image, and there is a one-to-one correspondence between the image pixels and the network neurons.

Fig.2 Neuron structure of simplified PCNN
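For orientation, a widely used simplified PCNN formulation that is consistent with the symbols defined above can be written as follows. This is an assumption based on the standard PCNN literature, not a transcription of the authors' equations, and it may differ in detail from Eqns.(2), (4) and (5); the neighborhood index pair (k, l) is introduced here for illustration.

```latex
\begin{aligned}
L_{ij}[n] &= V_L \sum_{k,l} W_{ijkl}\, Y_{kl}[n-1] \\
\theta_{ij}[n] &= e^{-\alpha_\theta}\,\theta_{ij}[n-1] + V_\theta\, Y_{ij}[n-1] \\
Y_{ij}[n] &=
\begin{cases}
1, & U_{ij}[n] > \theta_{ij}[n] \\
0, & \text{otherwise}
\end{cases}
\end{aligned}
```

In this form the linking input collects the pulses of neighboring neurons from the previous iteration, the threshold decays exponentially and jumps by Vθ after a neuron fires, and a neuron fires whenever its internal activity exceeds its threshold.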

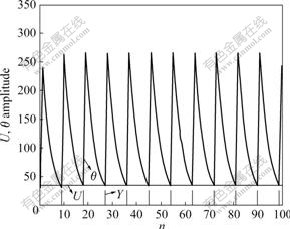

Consider a neuron that is not linked with other neurons, namely β=0. Under the stimulation of the external input signal, such an unattached neuron fires naturally and generates pulses at a certain frequency. Fig.3 shows the characteristic curve of an unattached neuron. It can be seen that the intensities of the external input pixels are converted into corresponding firing frequencies, and brighter pixels fire earlier.
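The statement that brighter pixels fire earlier can be checked with a small sketch of a single unattached neuron. It assumes an exponentially decaying threshold, θ[n]=exp(-αθ)·θ[n-1], which is the usual PCNN form but is not confirmed by the text here; the initial threshold `theta0=1.0`, the normalized stimuli and the function name `first_firing_iteration` are illustrative.

```python
import math

def first_firing_iteration(stimulus, theta0=1.0, alpha=0.2, max_iter=1000):
    """Return the first iteration n >= 1 at which the stimulus exceeds the
    decaying threshold, or None if it never fires within max_iter."""
    theta = theta0
    for n in range(1, max_iter + 1):
        theta *= math.exp(-alpha)      # threshold decay (assumed exponential)
        if stimulus > theta:           # beta = 0, so U equals the stimulus
            return n
    return None

bright = first_firing_iteration(0.8)   # brighter pixel
dark = first_firing_iteration(0.2)     # darker pixel
print(bright, dark)                    # prints "2 9"
```

With αθ=0.2 the brighter stimulus crosses the threshold after 2 iterations and the darker one after 9, so intensity is mapped to firing time.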

Fig.3 Characteristic curve of single neuron

When neurons are coupled with each other, the firing of neuron Nij at time n changes the internal activity of a surrounding neuron Npq to Spq(1+βLpq). If Spq(1+βLpq)>θpq[n], then Npq fires early at time n and is called a captured neuron. Fig.4 shows the dynamic behavior of a captured neuron and an un-captured neuron.
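The capture effect can be illustrated with one update step of a coupled network. The sketch below is hedged: the internal activity follows Eqn.(3), but the linking input (sum of the eight neighbors' previous pulses, i.e. unit weights in W), the threshold update and the firing rule are the standard PCNN forms assumed for this example. The default values β=0.1, VL=1, αθ=0.2 and Vθ=10 000 are those reported in Section 5, and the initial threshold 1.2 is arbitrary.

```python
import math

def pcnn_step(S, Y_prev, theta_prev, beta=0.1, v_l=1.0,
              alpha=0.2, v_theta=10000.0):
    """One iteration of a simplified PCNN on a 2D list S (assumed forms)."""
    h, w = len(S), len(S[0])
    Y = [[0] * w for _ in range(h)]
    theta = [[0.0] * w for _ in range(h)]
    for i in range(h):
        for j in range(w):
            # Linking input: pulses of the 8 neighbors at the previous step.
            pulses = 0
            for di in (-1, 0, 1):
                for dj in (-1, 0, 1):
                    ni, nj = i + di, j + dj
                    if (di or dj) and 0 <= ni < h and 0 <= nj < w:
                        pulses += Y_prev[ni][nj]
            link = v_l * pulses
            activity = S[i][j] * (1.0 + beta * link)          # Eqn.(3)
            # Threshold decays, and jumps after the neuron has fired.
            theta[i][j] = (math.exp(-alpha) * theta_prev[i][j]
                           + v_theta * Y_prev[i][j])
            Y[i][j] = 1 if activity > theta[i][j] else 0
    return Y, theta

def run(S, n_steps, beta):
    """Return the list of pulse maps for n_steps iterations."""
    Y = [[0] * len(S[0]) for _ in S]
    theta = [[1.2] * len(S[0]) for _ in S]
    history = []
    for _ in range(n_steps):
        Y, theta = pcnn_step(S, Y, theta, beta=beta)
        history.append(Y)
    return history

S = [[1.0, 0.75]]                 # a bright pixel next to a dimmer one
coupled = run(S, 3, beta=0.1)
uncoupled = run(S, 3, beta=0.0)
```

With coupling, the dimmer neuron fires at the second iteration, right after its bright neighbor; without coupling it has to wait until the third iteration, when the threshold has decayed below its own stimulus.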

3.2 Fast 2D-Otsu algorithm

The Otsu algorithm, namely the maximum between-class variance method, was proposed by OTSU in 1979 [14]. It is widely used in image segmentation for extracting object regions from their background, but it fails for images of low SNR since it depends solely on the 1D gray level histogram of the image. In contrast, the 2D-Otsu algorithm performs well even on images with low SNR and low contrast because it uses both the gray level information and the spatial information of pixels. The expressions are as follows:

Fig.4 Dynamic behavior of captured neuron (a) and un-captured neuron (b)

(6)

where ω0 and ω1 are the probabilities of object and background respectively,

(7a)

(7b)

the mean level vectors are given by

(8a)

(8b)

(8c)

and pij is the joint probability, pij=fij/N, where N is the total number of pixels and fij is the frequency of the pair (i, j), in which i is the gray level of a pixel and j is the integer-valued average gray level of its neighborhood. The best segmentation result is achieved when σB(s, t) reaches its maximum value.
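For reference, one common way of writing the 2D-Otsu criterion in terms of the quantities named above is sketched below. It is the textbook form (trace of the between-class scatter matrix), given here as an assumption rather than a transcription of Eqns.(6)-(8c). The symbols μ0, μ1 and μT for the class and total mean vectors, and the convention that class 0 is the quadrant i≤s, j≤t, are introduced for illustration; which class corresponds to the object depends on image polarity.

```latex
\begin{aligned}
\omega_0 &= \sum_{i=0}^{s}\sum_{j=0}^{t} p_{ij}, \qquad
\omega_1 = \sum_{i=s+1}^{M-1}\sum_{j=t+1}^{M-1} p_{ij} \\
\boldsymbol{\mu}_0 &= \frac{1}{\omega_0}\Bigl(\sum_{i=0}^{s}\sum_{j=0}^{t} i\,p_{ij},\;
  \sum_{i=0}^{s}\sum_{j=0}^{t} j\,p_{ij}\Bigr)^{\mathrm T} \\
\boldsymbol{\mu}_1 &= \frac{1}{\omega_1}\Bigl(\sum_{i=s+1}^{M-1}\sum_{j=t+1}^{M-1} i\,p_{ij},\;
  \sum_{i=s+1}^{M-1}\sum_{j=t+1}^{M-1} j\,p_{ij}\Bigr)^{\mathrm T} \\
\boldsymbol{\mu}_T &= \Bigl(\sum_{i=0}^{M-1}\sum_{j=0}^{M-1} i\,p_{ij},\;
  \sum_{i=0}^{M-1}\sum_{j=0}^{M-1} j\,p_{ij}\Bigr)^{\mathrm T} \\
\sigma_B(s,t) &= \omega_0\,\lVert\boldsymbol{\mu}_0-\boldsymbol{\mu}_T\rVert^2
  + \omega_1\,\lVert\boldsymbol{\mu}_1-\boldsymbol{\mu}_T\rVert^2
\end{aligned}
```

Here M is the number of gray levels, and the threshold pair (s, t) that maximizes σB(s, t) is selected.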

Although the 2D-Otsu algorithm gives better segmentation results, its computation time increases sharply: the computational complexity is bounded by O(M^4), where M is the number of gray levels. In Ref.[12], we proposed a fast 2D-Otsu algorithm for image segmentation by using a distributed genetic algorithm based on migration strategy, and the simulation results indicated that the proposed method not only performed as well as conventional 2D-Otsu, but also ran more quickly. So in this work, considering the low contrast of retinal images, the fast 2D-Otsu algorithm in Ref.[12] was used to evaluate the segmentation results and control the number of iterations of simplified PCNN.
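To make the O(M^4) cost concrete, the following sketch performs the exhaustive 2D-Otsu search: M² candidate threshold pairs, each needing sums over up to M² histogram bins. It is a brute-force baseline, not the fast genetic-algorithm version of Ref.[12]. The textbook trace criterion, the 3×3 neighborhood, the integer gray levels and the function names `joint_histogram` and `otsu_2d` are assumptions made for illustration.

```python
def joint_histogram(img, levels):
    """Count pairs (i, j): i = gray level, j = floor of the 3x3 mean."""
    h, w = len(img), len(img[0])
    hist = [[0] * levels for _ in range(levels)]
    for r in range(h):
        for c in range(w):
            vals = [img[rr][cc]
                    for rr in range(max(r - 1, 0), min(r + 2, h))
                    for cc in range(max(c - 1, 0), min(c + 2, w))]
            hist[img[r][c]][sum(vals) // len(vals)] += 1
    return hist

def otsu_2d(hist):
    """Exhaustive search; returns (max between-class variance, s, t)."""
    m = len(hist)
    total = float(sum(map(sum, hist)))
    p = [[hist[i][j] / total for j in range(m)] for i in range(m)]
    mu_ti = sum(i * p[i][j] for i in range(m) for j in range(m))
    mu_tj = sum(j * p[i][j] for i in range(m) for j in range(m))
    best = (-1.0, 0, 0)
    for s in range(m - 1):
        for t in range(m - 1):
            w0 = m0i = m0j = 0.0
            for i in range(s + 1):             # class 0: i <= s, j <= t
                for j in range(t + 1):
                    w0 += p[i][j]
                    m0i += i * p[i][j]
                    m0j += j * p[i][j]
            w1 = m1i = m1j = 0.0
            for i in range(s + 1, m):          # class 1: i > s, j > t
                for j in range(t + 1, m):
                    w1 += p[i][j]
                    m1i += i * p[i][j]
                    m1j += j * p[i][j]
            if w0 <= 0.0 or w1 <= 0.0:
                continue                       # one class is empty
            var = (w0 * ((m0i / w0 - mu_ti) ** 2 + (m0j / w0 - mu_tj) ** 2)
                   + w1 * ((m1i / w1 - mu_ti) ** 2 + (m1j / w1 - mu_tj) ** 2))
            if var > best[0]:
                best = (var, s, t)
    return best

# Two well-separated clusters in an 8-level joint histogram.
toy_hist = [[0] * 8 for _ in range(8)]
toy_hist[1][1] = 50
toy_hist[6][6] = 50
variance, s, t = otsu_2d(toy_hist)
```

With 8 gray levels the search is instantaneous, but at 256 levels the four nested loops become expensive, which is the motivation for a faster search strategy.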

3.3 Segmentation using simplified PCNN and fast 2D-Otsu algorithm

The preprocessed retinal MFR images were used directly for blood vessels segmentation. Simplified PCNN first made the MFR image pixels fire successively as the dynamic threshold decayed. The fast 2D-Otsu algorithm then computed the between-class variances of the images fired by simplified PCNN at each iteration, and the image with the maximum between-class variance was taken as the best segmentation result.

When simplified PCNN was used in retinal blood vessels segmentation, it was a single layer 2D array of laterally linked neurons, and all the neurons were identical. The number of neurons in the network was equal to the number of pixels in the retinal MFR image, with a one-to-one correspondence between image pixels and neurons. Every neuron in simplified PCNN had the same connection mode: each neuron was connected with the neurons in its eight-neighbor field. So when a neuron fired, it activated the neurons in its eight-neighbor field and increased the intensity of their corresponding pixels.

When the fast 2D-Otsu algorithm was used to control the number of iterations and evaluate the segmentation results, the between-class variances were computed on the image activated by neighbor neurons at each iteration, not on the MFR image.

According to the above, the steps of retinal blood vessels segmentation using simplified PCNN and fast 2D-Otsu algorithm are shown as follows.

Step 1: Initialize the parameters of simplified PCNN.

Step 2: Perform one iteration, and in each iteration:

(1) Compute Eqns.(1)-(5) in turn to get the firing situation of the MFR image.

(2) Compute the between-class variances of the fired image using fast 2D-Otsu algorithm and find out the current maximum value.

Step 3: Compare the current maximum value with that of the previous iteration. If the current maximum value is larger than the previous one, return to Step 2; otherwise, end the iteration and take the fired image corresponding to the previous maximum value as the best segmentation result.
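The control flow of Steps 1-3 can be sketched independently of the PCNN and Otsu details. In the sketch below, `step` and `score` are hypothetical callables standing in for one pass of Eqns.(1)-(5) and for the maximum between-class variance returned by the fast 2D-Otsu algorithm; the `max_iter` guard is an added safety assumption, not part of the described procedure.

```python
def iterate_until_score_drops(step, score, state, max_iter=100):
    """Repeat `step` while `score` keeps increasing; return the state of
    the last iteration before the score stopped increasing."""
    best_state, best_score = None, float("-inf")
    for _ in range(max_iter):
        state = step(state)            # Step 2(1): one PCNN iteration
        current = score(state)         # Step 2(2): current maximum variance
        if current > best_score:       # Step 3: still improving, continue
            best_state, best_score = state, current
        else:                          # Step 3: stop, keep previous result
            break
    return best_state, best_score

# Toy stand-ins: the "state" is an integer and the score peaks at 3.
result = iterate_until_score_drops(
    step=lambda x: x + 1,
    score=lambda x: -(x - 3) ** 2,
    state=0,
)
print(result)   # prints "(3, 0)"
```

The loop stops at the first iteration whose score does not exceed the previous one and returns the previous state, which mirrors the rule of showing the fired image of the last maximum as the best segmentation result.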

4 Post processing

Because of the low intensity contrast between blood vessels and background, and because pathological regions were enhanced by mistake, some noise remained in the segmentation result, so post processing was necessary. According to the connectivity of the retinal vasculature, an area filter was designed to remove isolated pixels. It used an eight-connected operator to identify the separate connected regions and measure the area of each of them. If the area of a region was larger than a threshold, the region was regarded as blood vessel; otherwise, it was regarded as noise. In this work, the threshold was set to 50. The image after post processing was the final segmentation result. In addition, the skeleton of the post-processed blood vessels could be obtained by a thinning operation if required by subsequent processing.
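A minimal sketch of such an area filter is given below, using a breadth-first search over eight-connected neighbors. The binary mask as a list of lists and the function name `area_filter` are assumptions for the example; the eight-connectivity, the "larger than" rule and the default threshold of 50 follow the description above.

```python
from collections import deque

def area_filter(mask, min_area=50):
    """Keep only 8-connected regions whose area is larger than min_area."""
    h, w = len(mask), len(mask[0])
    seen = [[False] * w for _ in range(h)]
    out = [[0] * w for _ in range(h)]
    for r in range(h):
        for c in range(w):
            if not mask[r][c] or seen[r][c]:
                continue
            # Collect one connected region by breadth-first search.
            region = [(r, c)]
            seen[r][c] = True
            queue = deque(region)
            while queue:
                y, x = queue.popleft()
                for dy in (-1, 0, 1):
                    for dx in (-1, 0, 1):
                        ny, nx = y + dy, x + dx
                        if (0 <= ny < h and 0 <= nx < w
                                and mask[ny][nx] and not seen[ny][nx]):
                            seen[ny][nx] = True
                            queue.append((ny, nx))
                            region.append((ny, nx))
            if len(region) > min_area:          # vessel, keep it
                for y, x in region:
                    out[y][x] = 1
    return out

# A 60-pixel line (kept) and a 3-pixel diagonal speck (removed).
mask = [[0] * 70 for _ in range(10)]
for x in range(5, 65):
    mask[2][x] = 1
for k in range(3):
    mask[6 + k][10 + k] = 1
cleaned = area_filter(mask)
```

The diagonal speck counts as a single region because of the eight-connectivity, but its area of 3 is below the threshold, so it is discarded while the long line survives.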

5 Experimental results

The Hoover database [13] used in this experiment consists of 10 normal images and 10 abnormal images. The database also provides images hand-labeled by two experts as the ground truth for vessel segmentation. The first expert’s hand-labeled images were taken as the ground truth for evaluating the algorithm’s performance. All neurons of the simplified PCNN were fired only once, and the parameters of the simplified PCNN were set empirically as:

αθ=0.2, VL=1, Vθ=10 000, β=0.1, W .


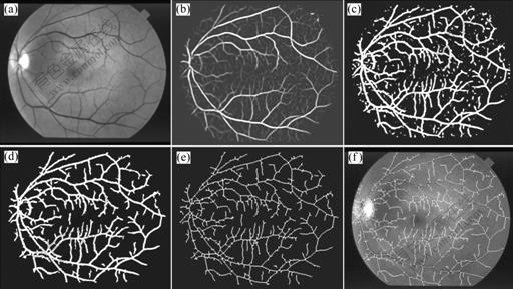

Fig.5 illustrates the processing steps of the proposed method for one image: Fig.5(a) shows the green channel of the original image, Fig.5(b) shows the MFR image, Fig.5(c) shows the segmentation result by simplified PCNN and fast 2D-Otsu algorithm, Fig.5(d) shows the final extraction result after post processing, Fig.5(e) shows the skeleton of the final extraction result, and Fig.5(f) shows the skeleton overlaid on the green channel image.

Fig.5 Schematic of processing by proposed method: (a) Green channel image; (b) MFR image; (c) Segmentation result; (d) Final extraction result; (e) Skeleton of blood vessels; (f) Overlapping image

In order to evaluate the performance of the proposed method, the final segmentation results were compared with those of other algorithms. Fig.6 shows the comparison. The first row shows the original retinal images in the Hoover database, the second row shows the images hand-labeled by the first expert as ground truth, the third row shows the segmentation results by the Hoover algorithm, the fourth row shows the segmentation results by PCNN and image’s entropy, the fifth row shows the segmentation results by PCNN and 1D-Otsu, and the last row shows the segmentation results of our method. The first column is the normal image numbered Im0082, the second and third columns are the low contrast images numbered Im0077 and Im0255, respectively, and the fourth column is the pathological image numbered Im0005.

From Fig.6, it can be seen that the results by PCNN and image’s entropy are badly over-segmented and much noise is segmented as target, so this method is not suitable for retinal blood vessels segmentation. In contrast, the results of the proposed method are superior: on small vessels it extracts more branch vessels and gives better connectivity than the Hoover algorithm and the PCNN and 1D-Otsu method. The proposed method is also robust for normal and low contrast images. For pathological images, however, the segmentation results contain some pathological regions, because these regions are also enhanced by the matched filter.

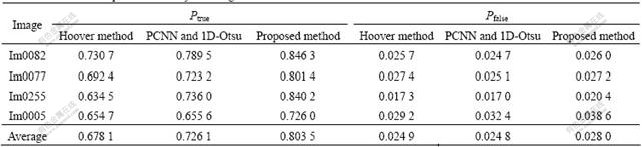

To further verify the effectiveness of the proposed method, the true positive rate Ptrue and the false positive rate Pfalse were used for evaluation [15]. The two indices are defined as follows:

Ptrue=TN/Nvp, Pfalse=FN/Nuvp (9)

where Nvp is the number of pixels marked as vessel in a ground truth image, Nuvp is the number of pixels marked as non-vessel in a ground truth image, TN is the number of pixels correctly segmented as vessel, and FN is the number of pixels falsely segmented as vessel. The first expert’s hand-labeled images are taken as ground truth. The larger the true positive rate, the more true vessels are segmented; the smaller the false positive rate, the fewer false vessels are segmented. Table 1 lists the computational results of the proposed method and other methods.
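The two indices can be computed from a binary segmentation and its ground truth as in the sketch below. It assumes that each rate is the ratio of the corresponding count to the number of ground truth pixels of that class, as the definitions above suggest; the function name `vessel_rates` and the list-of-lists masks are illustrative, and both classes are assumed to be present in the ground truth.

```python
def vessel_rates(segmented, truth):
    """Return (true positive rate, false positive rate) for binary masks."""
    true_vessel = false_vessel = n_vp = n_uvp = 0
    for seg_row, gt_row in zip(segmented, truth):
        for seg, gt in zip(seg_row, gt_row):
            if gt:                       # ground truth vessel pixel
                n_vp += 1
                true_vessel += 1 if seg else 0
            else:                        # ground truth non-vessel pixel
                n_uvp += 1
                false_vessel += 1 if seg else 0
    return true_vessel / n_vp, false_vessel / n_uvp

truth = [[1, 1, 0, 0],
         [1, 1, 0, 0]]
segmented = [[1, 1, 1, 0],
             [1, 0, 0, 0]]
p_true, p_false = vessel_rates(segmented, truth)
print(p_true, p_false)   # prints "0.75 0.25"
```

In this toy case three of the four vessel pixels are found and one of the four background pixels is wrongly marked as vessel.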

As the method based on PCNN and image’s entropy failed to segment blood vessels, it was not considered for performance evaluation. From Table 1 it can be seen that, for normal and low contrast retinal images, the false positive rate of the proposed method is similar to those of the other algorithms, while its true positive rate is larger than those of the PCNN and 1D-Otsu method and the Hoover method. This quantitative analysis further confirms that our method is robust for normal and low contrast images. However, for pathological images, the proposed method attains a true positive rate similar to that of the Hoover method, but a larger false positive rate. On average, the true positive rate of the proposed method is 0.803 5, larger than the Hoover method’s 0.678 1, and the false positive rate is 0.028 0, similar to the Hoover method’s 0.024 9. This means that our method can extract more true vessels than the other methods at a similar false positive rate.

Fig.6 Comparison of final segmentation results by four algorithms: The first row shows original retinal images; the second row shows hand-labeled images; the third row shows segmentation results by Hoover algorithm; the fourth row shows segmentation results by PCNN and image’s entropy; the fifth row shows segmentation results by PCNN and 1D-Otsu; the last row shows segmentation results of our method

Table 1 True and false positive rates by three algorithms

The experiments were implemented in MATLAB 6.5 on a computer with a 1.60 GHz dual-core CPU and 512 MB RAM, and the average time of our method for each retinal image of 607×700 pixels was about 1 min. Compared with the 2-4 h needed by experts for hand labeling and the 6-8 min of the Hoover algorithm, the proposed algorithm is more efficient and can satisfy clinical application.

6 Conclusions

(1) Extracting blood vessels from retinal images is a challenging problem, and the quality of the extracted vessels directly affects subsequent research and development. In this work, a new method combining simplified PCNN with a fast 2D-Otsu algorithm was proposed for retinal blood vessels segmentation. The preprocessed retinal MFR image was fired directly by simplified PCNN, and the fast 2D-Otsu algorithm was used to control the number of iterations and find the best segmentation result. Experimental results show that the proposed method outperforms the other algorithms on small vessels segmentation, especially for low contrast retinal images.

(2) Although the proposed method performs well for normal and low contrast retinal images, there is still room for improvement on pathological retinal images. Removing the pathological regions on the basis of their pathological features, and then segmenting the whole blood vessel network, is the subject of our subsequent work.

References

[1] YAO Chang, CHEN Hou-jin, LI Ju-peng. Segmentation of retinal blood vessels based on transition region extraction [J]. Acta Electronica Sinica, 2008, 36(5): 974-978. (in Chinese)

[2] CHAUDHURI S, CHATTERJEE S, KATZ N, NELSON M, GOLDBAUM M. Detection of blood vessels in retinal images using two-dimensional matched filters [J]. IEEE Transactions on Medical Imaging, 1989, 8(3): 263-269.

[3] JIANG X Y, MOJON D. Adaptive local thresholding by verification-based multithreshold probing with application to vessel detection in retinal images [J]. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2003, 25(1): 131-137.

[4] TOLIAS Y A, PANAS S M. A fuzzy vessel tracking algorithm for retinal images based on fuzzy clustering [J]. IEEE Transactions on Medical Imaging, 1998, 17(2): 263-273.

[5] TANG Min, WANG Hui-nan. Automatic segmentation algorithm of color retinal vascular images [J]. Chinese Journal of Scientific Instrument, 2007, 28(7): 1281-1285. (in Chinese)

[6] HOOVER A, KOUZNETSOVA V, GOLDBAUM M. Locating blood vessels in retinal images by piecewise threshold probing of a matched filter response [J]. IEEE Transactions on Medical Imaging, 2000, 19(3): 203-210.

[7] ECKHORN R, REITBOECK H J, ARNDT M, DICKE P. Feature linking via synchronization among distributed assemblies: Simulations of results from cat visual cortex [J]. Neural Computation, 1990, 2(3): 293-307.

[8] ZHANG Hong-liang, ZOU Zhong, LI Jie, CHEN Xiang-tao. Flame image recognition of alumina rotary kiln by artificial neural network and support vector machine methods [J]. Journal of Central South University of Technology, 2008, 15(1): 39-43.

[9] JOHNSON J L, PADGETT M L. PCNN models and applications [J]. IEEE Transactions on Neural Networks, 1999, 10(3): 480-498.

[10] MA Yi-de, DAI Ruo-lan, LI Lian. Automated image segmentation using pulse coupled neural networks and image’s entropy [J]. Journal on Communication, 2002, 23(1): 46-51. (in Chinese)

[11] LI Guo-you, LI Hui-guang, WU Ti-hua. Enhancement of image based on Otsu and modified PCNN [J]. Journal of System Simulation, 2005, 17(6): 1370-1372. (in Chinese)

[12] YAO Chang, CHEN Hou-jin, YU Jiang-bo, LI Ju-peng. Application of distributed genetic algorithm based on migration strategy in image segmentation [C]// The 3rd International Conference on Natural Computation. Haikou, 2007: 218-222.

[13] HOOVER A. Structured analysis of the retina [EB/OL]. [2000-11]. http://www.ces.clemson.edu/~ahoover/stare/.

[14] OTSU N. A threshold selection method from gray level histograms [J]. IEEE Transactions on Systems, Man and Cybernetics, 1979, 9(1): 62-66.

[15] HUANG Shu-ying, ZHANG Er-hu. A method for segmentation of retinal image vessels [C]// Proceedings of the 6th World Congress on Intelligent Control and Automation. Dalian, 2006: 9673-9676.

(Edited by YANG You-ping)

Foundation item: Project (60872081) supported by the National Natural Science Foundation of China; Project (50051) supported by the Program for New Century Excellent Talents in University; Project (4092034) supported by the Natural Science Foundation of Beijing

Received date: 2008-09-22; Accepted date: 2008-11-15

Corresponding author: YAO Chang, Doctoral candidate; Tel: +86-13810450968; E-mail: 06111029@bjtu.edu.cn