J. Cent. South Univ. Technol. (2011) 18: 2045-2049

DOI: 10.1007/s11771-011-0940-y

Quantitative comparison of similarity measure and entropy for fuzzy sets

JUNG Dong-yean1, CHOI Jung-Wook2, PARK Wook-Je2, LEE Sang-Hyuk3

1. Daeho Tech, Changwon, Gyeongnam, 641-773, Korea;

2. Institute for Information and Electronics Research, Inha University, 253 Yonghyun-dong,Nam-Gu, Incheon, 402-751, Korea;

3. Department of Electrical and Electronic Engineering, Xi’an Jiaotong-Liverpool University, Suzhou 215123, China

© Central South University Press and Springer-Verlag Berlin Heidelberg 2011

Abstract: The similarity measure and the entropy of fuzzy sets were compared and analyzed quantitatively. The fuzzy entropy was treated as a value proportional to the distance between a fuzzy set and the corresponding crisp set. The relation between the similarity measure and the entropy of a fuzzy set was also analyzed, and the fuzzy entropy was reformulated as a dissimilarity measure. Furthermore, the crisp set having the minimum uncertainty with respect to the corresponding fuzzy set was proposed. Finally, a similarity measure was derived from the entropy with the help of the total information property. A simple example shows the relation between the similarity measure and the fuzzy entropy, in which the sum of the similarity measure and the fuzzy entropy is a constant.

Key words: similarity measure; fuzzy entropy; minimum uncertainty; quantitative comparison

1 Introduction

To obtain results in data mining, pattern classification or clustering, it is essential to analyze the certainty and uncertainty of data. For this analysis, a data set is often regarded as a fuzzy set with a membership function. Hence, fuzzy entropy and similarity analyses have been emphasized for studying the uncertainty and certainty information of fuzzy sets [1-5].

The entropy of a fuzzy set has been described by previous researchers as a measure of its fuzziness, and it has been designed on the basis of distance measures such as the Hamming distance [6-7]. The similarity measure is regarded as the dual concept of fuzzy entropy or distance measure [5]. The relation between similarity measure and fuzzy entropy or dissimilarity measure was discussed in the previous literature [8]. The degree of similarity between two or more data sets can be used in decision making, pattern classification, etc. [9-11]. Numerous researchers have derived similarity measures [12-14]. In particular, similarity measures based on the distance measure are applicable to general fuzzy membership functions, including non-convex fuzzy membership functions [15].

The two measures, entropy and similarity, represent the uncertainty and the similarity with respect to the corresponding crisp set, respectively. Hence, it is of interest to study the relation between the entropy and the similarity measure of a data set. LIU [5] also proposed a relation between distance and similarity measures, in which the sum of distance and similarity constitutes the total information.

In this work, the relationship between the entropy and similarity measures for fuzzy sets was analyzed, and the corresponding measures were computed quantitatively. First, fuzzy entropy and similarity measures were derived from the distance measure. The entropy and similarity of data were then discussed. With the proposed fuzzy entropy and similarity measure, the property that the total information comprises the similarity measure and the entropy measure was verified. An example illustrates the total information property between fuzzy entropy and similarity as the uncertainty and certainty measures of data.

2 Preliminaries on fuzzy entropy and similarity measure

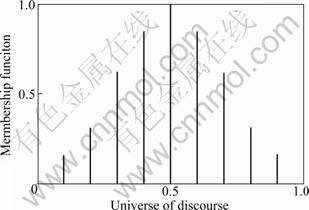

Every datum carries uncertainty within its data group, which is expressed by the membership function of a fuzzy set. Data uncertainty can be evaluated by fuzzy entropy, and explicit fuzzy entropy constructions have been proposed by numerous researchers [5, 7, 15]. The fuzzy entropy of a fuzzy set indicates how much uncertainty the fuzzy set contains with respect to the corresponding crisp set. What, then, is the data certainty with respect to the deterministic data? This data certainty can be obtained through the similarity measure. First, the relation between fuzzy entropy and similarity measure is represented by a quantitative comparison. Of the two sets being compared, one is a fuzzy set and the other is the corresponding crisp set. Consider the pair illustrated in Fig.1, where the crisp set Anear represents the crisp set “near” to the fuzzy set A.

Fig.1 Membership functions of fuzzy set A and crisp set Anear=A0.5

Anear could be assigned by various variables. For example, the membership value of crisp set A0.5 is one when $\mu_A(x)\ge 0.5$ and is zero otherwise. Here, Afar is the complement of Anear, i.e., $A_{\mathrm{far}}=A_{\mathrm{near}}^{c}$. In the previous result, the fuzzy entropy of fuzzy set A with respect to Anear is represented as [6]

$$e(A, A_{\mathrm{near}})=d(A\cap A_{\mathrm{near}}, [1]_X)+d(A\cup A_{\mathrm{near}}, [0]_X)-1 \qquad (1)$$

where $d(A\cap B,[1]_X)=\frac{1}{n}\sum_{i=1}^{n}\left|\mu_{A\cap B}(x_i)-1\right|$ is satisfied. $A\cap A_{\mathrm{near}}$ and $A\cup A_{\mathrm{near}}$ are the minimum and maximum values between A and Anear, respectively. [0]X and [1]X are the fuzzy sets whose membership functions are zero and one over the universe of discourse, respectively, and d is the Hamming distance measure. Equation (1) is not the normal fuzzy entropy. The normal fuzzy entropy, whose maximal value is one, is obtained by multiplying the right-hand side of Eq.(1) by two. Numerous fuzzy entropies can also be constructed from the fuzzy entropy definition [5]. Here, the same result as Eq.(1) is obtained from

$$e(A, A_{\mathrm{near}})=d(A\cap A_{\mathrm{near}}^{c}, [0]_X)+d(A\cup A_{\mathrm{near}}^{c}, [1]_X) \qquad (2)$$

The fuzzy entropies of Eqs.(1) and (2) hold for every choice of the crisp set Anear.
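The agreement between the two entropy forms can be checked numerically. The following is a minimal sketch, not the authors' implementation. It assumes the normalized Hamming distance d(A, B)=(1/n)Σ|μA(xi)-μB(xi)|, the forms e=d(A∩Anear, [1]X)+d(A∪Anear, [0]X)-1 for Eq.(1) and e=d(A∩Afar, [0]X)+d(A∪Afar, [1]X) for Eq.(2), and a crisp set A0.5 that is one where the membership value is at least 0.5. The function names are hypothetical, and the membership values are those of the discrete fuzzy set used in Section 3.2.

```python
from typing import Sequence


def hamming(a: Sequence[float], b: Sequence[float]) -> float:
    """Normalized Hamming distance: (1/n) * sum of |a_i - b_i|."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def entropy_eq1(mu: Sequence[float], near: Sequence[float]) -> float:
    """Assumed Eq.(1) form: d(A ∩ Anear, [1]) + d(A ∪ Anear, [0]) - 1."""
    n = len(mu)
    inter = [min(m, c) for m, c in zip(mu, near)]  # intersection = pointwise minimum
    union = [max(m, c) for m, c in zip(mu, near)]  # union = pointwise maximum
    return hamming(inter, [1.0] * n) + hamming(union, [0.0] * n) - 1.0


def entropy_eq2(mu: Sequence[float], near: Sequence[float]) -> float:
    """Assumed Eq.(2) form: d(A ∩ Afar, [0]) + d(A ∪ Afar, [1])."""
    n = len(mu)
    far = [1.0 - c for c in near]  # Afar is the complement of Anear
    inter = [min(m, f) for m, f in zip(mu, far)]
    union = [max(m, f) for m, f in zip(mu, far)]
    return hamming(inter, [0.0] * n) + hamming(union, [1.0] * n)


# Membership values of the discrete fuzzy set from Section 3.2.
mu_a = [0.2, 0.4, 0.7, 0.9, 1.0, 0.9, 0.7, 0.4, 0.2, 0.0]
# Crisp set A0.5 (assumed convention: membership >= 0.5 maps to 1).
a_half = [1.0 if m >= 0.5 else 0.0 for m in mu_a]

e1 = entropy_eq1(mu_a, a_half)
e2 = entropy_eq2(mu_a, a_half)
print(round(e1, 6), round(e2, 6))  # prints: 0.2 0.2
```

Both forms give 0.2 for this data, which is the value of e(A, A0.5) reported in the illustrative example.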

2.1 Method of least uncertainty

For an ordinary (crisp) set Anear, it is of interest to decide which crisp set is the nearest to the fuzzy set A. The least uncertainty identifies the ordinary data set that is most similar to the fuzzy data. From the fuzzy entropies of Eqs.(1) and (2), the entropy value is proportional to the area of the difference between A and Anear. Hence, for a crisp set A0,x, Eqs.(1) and (2) should be rewritten as [16]

(3)

Let  e(A, Anear) is shown to be

The maxima or minima are obtained by differentiation:

Hence, it is clear that the point x satisfying $\frac{d}{dx}e(A, A_{\mathrm{near}})=0$ is the critical point for the crisp set. This point is given by μA(x)=1/2, i.e., Anear=A0.5. The fuzzy entropy between A and A0.5 has the minimum value because e(A, A0,0) or e(A, Anear) attains the maximum when the corresponding crisp set is A0,0 or

This result is shown from a heuristic point of view. By analysis, it is also shown that

≤

Similarly, e(A, A0.5)= is also satisfied.

Generally, the second derivative with respect to x should be

where  is positive for the general fuzzy membership function within x≤xmax. For a symmetric fuzzy membership function, it is natural that

e(A, A0.5)=e(A, A0, x) (4)

Hence, for a convex and symmetric fuzzy set, the minimum entropy of the fuzzy set is attained with the crisp set A0.5. This indicates that the corresponding crisp set has the least uncertainty, or the greatest similarity, when the crisp set is A0.5.
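The critical-point argument of this subsection can be sketched as follows. This is an illustrative derivation under stated assumptions, not the exact form of Eq.(3): it assumes a continuous membership function μA that increases on [0, xmax] (with xmax taken here as the location of the peak), a crisp set that is zero on [0, x) and one on [x, xmax], and an entropy proportional to the area between the two curves. The symbol E(x) is introduced here only for illustration.

```latex
\begin{align*}
E(x) &= \int_{0}^{x} \mu_A(t)\,dt + \int_{x}^{x_{\max}} \bigl(1-\mu_A(t)\bigr)\,dt, \\
\frac{dE}{dx} &= \mu_A(x) - \bigl(1-\mu_A(x)\bigr) = 2\mu_A(x) - 1, \\
\frac{dE}{dx} = 0 &\iff \mu_A(x) = \tfrac{1}{2}, \\
\frac{d^{2}E}{dx^{2}} &= 2\,\frac{d\mu_A(x)}{dx} > 0 \quad \text{wherever } \mu_A \text{ is strictly increasing.}
\end{align*}
```

Under these assumptions the critical point is a minimum, which agrees with the statement that the crisp set A0.5 gives the least entropy.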

2.2 Relation between similarity and certainty

Studies on similarity measures have dealt with the derivation of similarity measures and their application to computing the degree of similarity. LIU [5] also proposed an axiomatic definition of the similarity measure. The similarity measure for $\forall A, B\in F(X)$ and $\forall D\in P(X)$ has the following four properties:

(S1) $s(A,B)=s(B,A)$, $\forall A, B\in F(X)$,

(S2) $s(D,D^{c})=0$, $\forall D\in P(X)$,

(S3) $s(C,C)=\max_{A,B\in F(X)} s(A,B)$, $\forall C\in F(X)$,

(S4) $\forall A, B, C\in F(X)$, if $A\subset B\subset C$, then s(A, B)≥s(A, C) and s(B, C)≥s(A, C)

where F(X) denotes the class of fuzzy sets on X, and P(X) the class of crisp sets on X.

In the previous studies, a similarity measure between two arbitrary fuzzy sets was proposed as follows [12]:

For any two fuzzy sets $A, B\in F(X)$,

$$s(A,B)=d(A\cap B,[0]_X)+d(A\cup B,[1]_X) \qquad (5)$$

is the similarity measure between set A and set B.
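The axioms can be spot-checked on small discrete sets. The sketch below is illustrative only. It assumes the normalized Hamming distance and the form s(A, B)=d(A∩B, [0]X)+d(A∪B, [1]X) for the similarity measure of [12]; the fuzzy sets and the crisp set are hypothetical values chosen for the check, and a numerical check of this kind illustrates (S1) to (S3) without proving them.

```python
def hamming(a, b):
    """Normalized Hamming distance: (1/n) * sum of |a_i - b_i|."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def similarity(a, b):
    """Assumed form of the similarity measure: d(A ∩ B, [0]) + d(A ∪ B, [1])."""
    n = len(a)
    inter = [min(x, y) for x, y in zip(a, b)]  # intersection = pointwise minimum
    union = [max(x, y) for x, y in zip(a, b)]  # union = pointwise maximum
    return hamming(inter, [0.0] * n) + hamming(union, [1.0] * n)


# Hypothetical membership values, used only for this check.
a = [0.1, 0.6, 1.0, 0.6, 0.1]
b = [0.0, 0.3, 0.8, 0.9, 0.4]
crisp = [0.0, 1.0, 1.0, 0.0, 0.0]
crisp_c = [1.0 - v for v in crisp]  # complement of the crisp set

s_ab = similarity(a, b)
s_ba = similarity(b, a)
s_dd = similarity(crisp, crisp_c)
s_aa = similarity(a, a)

print("S1 symmetric:", abs(s_ab - s_ba) < 1e-12)
print("S2 s(D, Dc) is zero:", abs(s_dd) < 1e-12)
print("S3 s(A, A) is maximal here:", s_ab <= s_aa + 1e-12)
```

Pointwise, min(a, b)+1-max(a, b)=1-|a-b|, so under these assumptions the measure reduces to one minus the normalized Hamming distance between the two sets, which is why s(A, A)=1 is the largest attainable value.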

The proposed similarity measure between A and Anear is presented, and the usefulness is also verified.

Theorem 2.1: For any set $A\in F(X)$ and the crisp set Anear in Fig.1,

$$s(A,A_{\mathrm{near}})=d(A\cap A_{\mathrm{near}},[0]_X)+d(A\cup A_{\mathrm{near}},[1]_X) \qquad (6)$$

is a similarity measure.

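Proof: (S1) follows from Eq.(6). For a crisp set D, it is clear that s(D, Dc)=0. Hence, (S2) is satisfied. (S3) indicates that the similarity measure of two identical fuzzy sets, s(C, C), attains the maximum value among the similarity measures of different fuzzy sets A and B, since $s(C,C)=d(C,[0]_X)+d(C,[1]_X)=1$ represents the entire region of Fig.1.

Finally, from

$$d(A\cap A_{1,\mathrm{near}},[0]_X)\ge d(A\cap A_{2,\mathrm{near}},[0]_X)$$

and

$$d(A\cup A_{1,\mathrm{near}},[1]_X)\ge d(A\cup A_{2,\mathrm{near}},[1]_X),$$

for $A\subset A_{1,\mathrm{near}}\subset A_{2,\mathrm{near}}$, it follows that

$$s(A,A_{1,\mathrm{near}})\ge s(A,A_{2,\mathrm{near}}).$$

Similarly, s(A1,near, A2,near)≥s(A, A2,near) is satisfied by the inclusion properties:

$$d(A_{1,\mathrm{near}}\cap A_{2,\mathrm{near}},[0]_X)\ge d(A\cap A_{2,\mathrm{near}},[0]_X)$$

and

$$d(A_{1,\mathrm{near}}\cup A_{2,\mathrm{near}},[1]_X)\ge d(A\cup A_{2,\mathrm{near}},[1]_X).$$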

The similarity measure of Eq.(6) represents the area shared by the two membership functions. In Eq.(5), fuzzy set B can be replaced by Anear. In addition to Eq.(5), numerous similarity measures satisfying the definition of a similarity measure can be derived. In Fig.1, the total area is one (universe of discourse × maximum membership value=1×1=1), and it represents the total amount of information. Hence, the total information comprises the similarity measure and the entropy measure:

s(A, Anear)+e(A, Anear)=1 (7)

With the similarity measure Eq.(5) and the total information expression Eq.(7), the following proposition is obtained.

Proposition 2.1: In Eq.(7), e(A, Anear) follows from the similarity measure Eq.(5):

$$e(A,A_{\mathrm{near}})=1-s(A,A_{\mathrm{near}})=d(A\cap A_{\mathrm{near}},[1]_X)+d(A\cup A_{\mathrm{near}},[0]_X)-1$$

The above fuzzy entropy is identical to that of Eq.(1). The property given by Eq.(7) is also formulated as follows.

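Theorem 2.2: The total information about fuzzy set A and the corresponding crisp set Anear,

$$s(A,A_{\mathrm{near}})+e(A,A_{\mathrm{near}})=d(A\cap A_{\mathrm{near}},[0]_X)+d(A\cup A_{\mathrm{near}},[1]_X)+d(A\cap A_{\mathrm{near}},[1]_X)+d(A\cup A_{\mathrm{near}},[0]_X)-1,$$

equals one.

Proof: It is clear that the sum of the similarity measure and fuzzy entropy equals one, which is the total area of Fig.1. Furthermore, it is also verified by computation:

$$d(A\cap A_{\mathrm{near}},[0]_X)+d(A\cap A_{\mathrm{near}},[1]_X)=1$$

and

$$d(A\cup A_{\mathrm{near}},[0]_X)+d(A\cup A_{\mathrm{near}},[1]_X)=1.$$

Hence, s(A, Anear)+e(A, Anear)=1+1-1=1 follows.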
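The computation in the proof can be made explicit pointwise. The sketch below assumes the normalized Hamming distance d(A, B)=(1/n)Σ|μA(xi)-μB(xi)|, membership values in [0, 1], the similarity s=d(A∩Anear, [0]X)+d(A∪Anear, [1]X) and the entropy e=d(A∩Anear, [1]X)+d(A∪Anear, [0]X)-1. The symbol Y is a placeholder introduced here for either A∩Anear or A∪Anear.

```latex
\begin{align*}
d(Y,[0]_X)+d(Y,[1]_X)
  &= \frac{1}{n}\sum_{i=1}^{n}\mu_Y(x_i)
   + \frac{1}{n}\sum_{i=1}^{n}\bigl(1-\mu_Y(x_i)\bigr) = 1, \\
s(A,A_{\mathrm{near}})+e(A,A_{\mathrm{near}})
  &= \underbrace{d(A\cap A_{\mathrm{near}},[0]_X)+d(A\cap A_{\mathrm{near}},[1]_X)}_{=1}
   + \underbrace{d(A\cup A_{\mathrm{near}},[0]_X)+d(A\cup A_{\mathrm{near}},[1]_X)}_{=1} - 1 = 1 .
\end{align*}
```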

Now, it is clear that the total information about fuzzy set A comprises the similarity and entropy measures with respect to the corresponding crisp set Anear. The following proposition summarizes the meaning of the similarity measure and the fuzzy entropy from a heuristic point of view.

Proposition 2.2: Similarity measure s(A, Anear) and fuzzy entropy e(A, Anear) denote the certainty and the uncertainty, respectively, between fuzzy set A and ordinary set Anear.

3 Relation between similarity measure and fuzzy entropy

With the property of Theorem 2.2, a similarity measure is derived from the entropy, and the derivation of the entropy from the similarity is also described. This conversion makes it possible to formulate one measure from its complementary measure. From the previous similarity measure Eq.(5), fuzzy entropy Eq.(1) is obtained. It is also possible to obtain another similarity measure from fuzzy entropy Eq.(2). The proposed fuzzy entropy is developed using the Hamming distances between a fuzzy set and the corresponding crisp set.

3.1 Similarity measure derivation

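The following result can also be obtained from Fig.1. Equation (2) represents the difference between A and the corresponding crisp set Anear. From Theorem 2.2, the following similarity measure is obtained:

$$s(A,A_{\mathrm{near}})=1-d(A\cap A_{\mathrm{near}}^{c},[0]_X)-d(A\cup A_{\mathrm{near}}^{c},[1]_X) \qquad (8)$$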

Here, it is interesting to determine whether Eq.(8) satisfies the conditions for a similarity measure.

Proof: (S1) follows from Eq.(8). Furthermore, $s(D,D^{c})$ is zero because $d(D\cap D,[0]_X)+d(D\cup D,[1]_X)$ satisfies $d(D,[0]_X)+d(D,[1]_X)=1$. Hence, (S2) is satisfied. (S3) is also satisfied since

hence, it follows that s(C, C) is a maximum. Finally,

because

and

are satisfied for $A\subset B\subset C$. The inequality s(B, C)≥s(A, C) is also satisfied in a similar manner.

The similarity measure derived from fuzzy entropy has the same structure as the one designed from the similarity definition. The following corollary states that the two similarity measures are identical even though they are derived in different ways.

Corollary 3.1: Similarity measures Eqs.(6) and (8) are equal.

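Corollary 3.1 means that the following equality is satisfied:

$$d(A\cap A_{\mathrm{near}},[0]_X)+d(A\cup A_{\mathrm{near}},[1]_X)=1-d(A\cap A_{\mathrm{near}}^{c},[0]_X)-d(A\cup A_{\mathrm{near}}^{c},[1]_X)$$

It becomes

$$d(A\cap A_{\mathrm{near}},[0]_X)+d(A\cap A_{\mathrm{near}}^{c},[0]_X)+d(A\cup A_{\mathrm{near}},[1]_X)+d(A\cup A_{\mathrm{near}}^{c},[1]_X)=1$$

This equality can be verified easily by analyzing Fig.1, or analytically:

$$d(A\cap A_{\mathrm{near}},[0]_X)+d(A\cap A_{\mathrm{near}}^{c},[0]_X)=d(A,[0]_X),$$

which means the area of fuzzy set A, and

$$d(A\cup A_{\mathrm{near}},[1]_X)+d(A\cup A_{\mathrm{near}}^{c},[1]_X)=d(A,[1]_X)$$

because $\mu_{A\cup A_{\mathrm{near}}}(x)=1$ or $\mu_{A\cup A_{\mathrm{near}}^{c}}(x)=1$ is satisfied at every $x\in X$. Since $d(A,[0]_X)+d(A,[1]_X)=1$, the equality follows.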
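The equality stated by Corollary 3.1 can also be checked numerically on randomly generated sets. The sketch below is a sanity check rather than a proof. It assumes the normalized Hamming distance, the form s=d(A∩Anear, [0]X)+d(A∪Anear, [1]X) for Eq.(6) and the form s=1-d(A∩Afar, [0]X)-d(A∪Afar, [1]X) for Eq.(8), with Afar the complement of Anear. The function names, the seed and the trial count are hypothetical choices.

```python
import random


def hamming(a, b):
    """Normalized Hamming distance: (1/n) * sum of |a_i - b_i|."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def similarity_eq6(mu, near):
    """Assumed Eq.(6) form: d(A ∩ Anear, [0]) + d(A ∪ Anear, [1])."""
    n = len(mu)
    inter = [min(m, c) for m, c in zip(mu, near)]
    union = [max(m, c) for m, c in zip(mu, near)]
    return hamming(inter, [0.0] * n) + hamming(union, [1.0] * n)


def similarity_eq8(mu, near):
    """Assumed Eq.(8) form: 1 - d(A ∩ Afar, [0]) - d(A ∪ Afar, [1])."""
    n = len(mu)
    far = [1.0 - c for c in near]  # Afar is the complement of Anear
    inter = [min(m, f) for m, f in zip(mu, far)]
    union = [max(m, f) for m, f in zip(mu, far)]
    return 1.0 - hamming(inter, [0.0] * n) - hamming(union, [1.0] * n)


rng = random.Random(0)  # fixed seed so the check is repeatable
max_gap = 0.0
for _ in range(1000):
    n = rng.randint(1, 20)
    mu = [rng.random() for _ in range(n)]                 # random fuzzy set
    near = [float(rng.random() < 0.5) for _ in range(n)]  # random crisp set
    gap = abs(similarity_eq6(mu, near) - similarity_eq8(mu, near))
    max_gap = max(max_gap, gap)

print("largest difference between the two forms:", max_gap)
```

The largest difference stays at the level of floating-point rounding, which is consistent with the two measures being equal for any crisp set Anear.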

With the previous result, the minimum fuzzy entropy could be obtained when the entropy between the fuzzy sets A and A0.5 is considered. Furthermore, it is also obvious that the maximum similarity is satisfied as Eq.(10):

(9)

(10)

3.2 Illustrative example

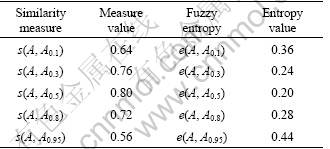

The relation between the two measures is explained by computing the similarity measure and the fuzzy entropy. Heuristically, the values of fuzzy entropy should decrease as those of the similarity measure increase. A fictitious discrete fuzzy set with membership function, A={x, μA(x)}, is considered:

A={(0.1, 0.2), (0.2, 0.4), (0.3, 0.7), (0.4, 0.9), (0.5, 1), (0.6, 0.9), (0.7, 0.7), (0.8, 0.4), (0.9, 0.2), (1, 0)}

Fuzzy set A is illustrated in Fig.2. The fuzzy entropy and similarity measures are calculated using Eqs.(2) and (8), in which variable “near” is varied from 0.1 to 0.95. The calculation results indicate that the total information is composed of data certainty and uncertainty with respect to the ordinary data set.

Fig.2 Membership functions of fuzzy set A={x, μA(x)}

Table 1 lists the calculated values of s(A, Anear) and e(A, Anear). The maximum similarity measure, s(A, A0.5), is calculated with the following equation:

s(A, A0.5)=1-1/10(0.2+0.4+0.4+0.2)-1/10(0.3+0.1+0.1+0.3)=0.8

Table 1 Similarity and entropy value between fuzzy set and corresponding crisp set

Fuzzy entropy e(A, A0.5) is also calculated by

e(A, A0.5)=1/10(0.2+0.4+0.4+0.2)+1/10(0.3+0.1+0.1+0.3)=0.2

The remaining similarity measures and fuzzy entropies are calculated in a similar manner.
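The calculation for the remaining crisp sets can be sketched in a few lines. This is a hedged illustration, not the procedure used to produce Table 1. It assumes that the variable “near” is the threshold level of the crisp set (membership value at or above the level maps to one), that the entropy has the form d(A∩Afar, [0]X)+d(A∪Afar, [1]X) of Eq.(2), and that the similarity is one minus the same two distance terms as in Eq.(8). The grid of levels and the function names are hypothetical.

```python
def hamming(a, b):
    """Normalized Hamming distance: (1/n) * sum of |a_i - b_i|."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def crisp_near(mu, level):
    """Crisp set that is 1 where the membership value is >= level (assumed convention)."""
    return [1.0 if m >= level else 0.0 for m in mu]


def distance_terms(mu, near):
    """Return d(A ∩ Afar, [0]) and d(A ∪ Afar, [1]), with Afar the complement of Anear."""
    n = len(mu)
    far = [1.0 - c for c in near]
    inter = [min(m, f) for m, f in zip(mu, far)]
    union = [max(m, f) for m, f in zip(mu, far)]
    return hamming(inter, [0.0] * n), hamming(union, [1.0] * n)


def entropy(mu, near):
    """Assumed Eq.(2) form: sum of the two distance terms."""
    d_inter, d_union = distance_terms(mu, near)
    return d_inter + d_union


def similarity(mu, near):
    """Assumed Eq.(8) form: one minus the two distance terms."""
    d_inter, d_union = distance_terms(mu, near)
    return 1.0 - d_inter - d_union


# Membership values of the fictitious fuzzy set A at x = 0.1, 0.2, ..., 1.
mu_a = [0.2, 0.4, 0.7, 0.9, 1.0, 0.9, 0.7, 0.4, 0.2, 0.0]
# Hypothetical grid of threshold levels between 0.1 and 0.95.
levels = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 0.95]

rows = []
for level in levels:
    near = crisp_near(mu_a, level)
    rows.append((level, similarity(mu_a, near), entropy(mu_a, near)))

print("level  similarity  entropy")
for level, s, e in rows:
    print(f"{level:>5}  {s:10.2f}  {e:7.2f}")
```

For the level 0.5 the sketch gives a similarity of 0.8 and an entropy of 0.2, the values computed in the text, and no other level on this grid yields a smaller entropy.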

4 Conclusions

1) The quantification of fuzzy entropy and similarity for fuzzy sets was studied. Fuzzy entropies for fuzzy sets were developed by considering the crisp set “near” the fuzzy set. The minimum entropy was obtained by calculating the area; it is attained when the crisp set is Anear=A0.5, and this was also verified. The minimum fuzzy entropy corresponds to the maximum similarity measure value.

2) The similarity measure between the fuzzy set and the corresponding crisp set was derived from the fuzzy entropy, and vice versa. From the heuristic point of view, the similarity measure and the fuzzy entropy represent the certainty and the uncertainty with respect to the corresponding crisp data.

3) With fictitious fuzzy data, the similarity measure and the fuzzy entropy were calculated. The sum of the fuzzy entropy and the similarity measure between the fuzzy set and the corresponding crisp set was verified to be a constant value. It was also shown that the fuzzy entropy and similarity measure values together constitute the whole area of information.

References

[1] EULALIA S, JANUSZ K. Entropy for intuitionistic fuzzy sets [J]. Fuzzy Sets and Systems, 2001, 118: 467-477.

[2] DE LUCA A, TERMINI S. A definition of nonprobabilistic entropy in the setting of fuzzy sets theory [J]. Information and Control, 1972, 20(3): 301-312.

[3] BHANDARI D, PAL N R. Some new information measure of fuzzy sets [J]. Information Sciences, 1993, 67: 209-228.

[4] GHOSH A. Use of fuzziness measure in layered networks for object extraction: A generalization [J]. Fuzzy Sets and Systems, 1995, 72: 331-348.

[5] LIU Xue-cheng. Entropy, distance measure and similarity measure of fuzzy sets and their relations [J]. Fuzzy Sets and Systems, 1992, 52: 305-318.

[6] FAN J L, MA Y L, XIE W X. On some properties of distance measures [J]. Fuzzy Sets and Systems, 2001, 117: 355-361.

[7] FAN J L, XIE W X. Distance measure and induced fuzzy entropy [J]. Fuzzy Sets and Systems, 1999, 104: 305-314.

[8] LEE S H, PEDRYCZ W, SOHN G Y. Design of similarity and dissimilarity measures for fuzzy sets on the basis of distance measure [J]. International Journal of Fuzzy Systems, 2009, 11(2): 67-72.

[9] RÉBILLÉ Y. Decision making over necessity measures through the Choquet integral criterion [J]. Fuzzy Sets and Systems, 2006, 157: 3025-3039.

[10] KANG W S, CHOI J Y. Domain density description for multiclass pattern classification with reduced computational load [J]. Pattern Recognition, 2008, 41(6): 1997-2009.

[11] SHIH F Y, ZHANG K. A distance-based separator representation for pattern classification [J]. Image and Vision Computing, 2008, 26(5): 667-672.

[12] LEE S H, KIM J M, CHOI Y K. Similarity measure construction using fuzzy entropy and distance measure [J]. Lecture Notes in Artificial Intelligence, 2006, 4114: 952-958.

[13] CHEN Shi-ming. New methods for subjective mental workload assessment and fuzzy risk analysis [J]. Cybern Syst, 1996, 27(5): 449-472.

[14] CHEN S J, CHEN S M. Fuzzy risk analysis based on similarity measures of generalized fuzzy numbers [J]. IEEE Trans on Fuzzy Systems, 2003, 11(1): 45-56.

[15] LEE S H, LEE S M, SOHN G Y, KIM J H. Fuzzy entropy design for non convex fuzzy set and application to mutual information [J]. Journal of Central South University of Technology, 2011, 18: 184-189.

[16] LEE S H, RYU K H, SOHN G Y. Study on entropy and similarity measure for fuzzy set [J]. IEICE Trans Inf & Syst, 2009, E92-D(9): 1783-1786.

(Edited by YANG Bing)

Foundation item: Project(2010-0020163) supported by Priority Research Centers Program through the National Research Foundation of Korea (NRF) funded by the Ministry of Education, Science and Technology, Korea

Received date: 2010-02-15; Accepted date: 2011-04-28

Corresponding author: LEE Sang-Hyuk, PhD; Tel: +82-512-88161415; E-mail: leehyuk@inha.ac.kr