BP algorithm of Hessian matrix based on

fuzzy optimization model and its application

GUO Yu(郭瑜)1, CHEN Shou-yu(陈守煜)2

(1. Pearl River Hydraulic Research Institute, Ministry of Water Resources, Guangzhou 510611, China;

2. School of Civil Engineering and Architecture, Dalian University of Technology, Dalian 116024, China)

Abstract: On the basis of fuzzy optimization model, the concept of equivalent error function is introduced to establish a new back propagation (BP) algorithm of Hessian matrix to promote the calculation. The Newton’s iteration method is applied to promote the training efficiency and accelerate the convergence. This method is used in the decision-making analysis for economic, water resources and environmental planning of the Dalian City.

Key words: fuzzy optimization model; neural networks; error function; Hessian matrix; BP algorithm

CLC number: TP273 Document code: A Article ID: 1672-7207(2011)S1-0008-04

1 Introduction

In 1990, Chen et al[1] presented multi-objective fuzzy optimal selection theory; then in 1997 Chen[2] developed the theory and established multi-objective decision-making model with fuzzy optimization model. Based on correlation between fuzzy optimization theory and neural networks that the model constructs reasonable topological structure, viz. input layer, hidden layer, output layer and nodes, and the model takes fuzzy optimal selection mode as stimulation function[3].

Current neural networks generally select Sigmoid functions as stimulation functions, yet there have not physical sense and completely are black-box operation. However, stimulation functions of fuzzy optimization explicit physical signification, i.e., fuzzy optimization. Commonly, these algorithms are all founded upon gradient calculation and posses limitation of slow convergence[4], according to Refs. [5-6], the authors in the paper try to present a BP algorithm of Hessian matrix with fuzzy optimization, then apply the algorithm to plan sustainable development of water resources for the Dalian City. The results show the algorithm has faster convergent speed than conventional gradient calculation.

2 BP Fuzzy optimal selection

Topological structure of fuzzy optimization is composed of input layer, one or more hidden layers and an output layer[2]. According to the structure and relative membership of the decision-making system for multi-layered fuzzy optimization model, a neural network system is established. Here a 3-layered network is taken as an example to show relationships between input and output. In the neural network system, we select the total number of objectives m of fuzzy optimization model as input layer’s serial code and use relative membership degree (RMD) rij as input data. The information transfers directly to the hidden nodes, so at input layer the input data should be equal to output,

(1)

(1)

At the hidden layer, the nodes of input and output are corresponded to the input and output units of fuzzy optimization decision-making system, therefore input data of node k is

(2)

(2)

where wik is link-weight between nodes i and k, and it satisfies normalized conditions  ,

,  ; the outputs of hidden layer adopt fuzzy optimal equation is:

; the outputs of hidden layer adopt fuzzy optimal equation is:

(3)

(3)

At the output layer, number of nodes corresponds to the highest layer of fuzzy optimal system, and output information shows that RMD of comprehensive is an evaluating index of schemes, so the output layer only has one node p. Its input data is

(4)

(4)

where L is the node number of the hidden layer, wkp is a link-weight between the hidden node k and the output node p, and it satisfies  ,

,  ; whose output data is

; whose output data is

(5)

(5)

Obviously, the output upj of network just responds to the input rij of fuzzy optimization neural network system.

Assume that the optional schemes are n, RMD of comprehensive evaluating indexes of jth scheme is upj, then we conclude that the objective function for training weights of fuzzy optimal neural network is

(6)

(6)

The learning objective of neural network system is to get a minimum of possible errors E with a steady state. Chen et al[2-3] ever offered weight training algorithms of fuzzy optimization neural networks, but these algorithms all took gradient methods as their foundation. In this paper the author presents a BP algorithm of Hessian matrix of error function.

3 BP learning algorithm of Hessian matrix for fuzzy optimal neural networks

To simplify the calculation, we introduce the concept of equivalent error function and based on it, we set up BP algorithm of Hessian matrix. From Eqs. (5) and (6) we can conclude

(7)

(7)

We use Fj to express the latter part of Eq. (7):

(8)

(8)

Then Eq. (7) can be turned into

(9)

(9)

Generally Ipj is a simple multinomial of weights, so Fj is a quartic multinomial of weights and Ej is a fractional multinomial of weights and they can be marked as

,

,

Here we offer a proof to illustrate that E(w) and F(w) have the same zero data or root. From 0≤Ipj≤1, we can

easily get  ≤

≤ ≤1, then from Eq. (9) we can conclude:

≤1, then from Eq. (9) we can conclude:

≤

≤ ≤

≤

Consequently, we will get:

F(w)≤E(w)≤4F(w) (10)

Assume that w0 is a random zero data of E(w), i.e. E(w0)=0, from inequality (10) we know that 0≤F(w0)≤E(w0)=0, so F(w0)=0, that is to say, w0 is also zero data of F(w); similarly, we can testify that random zero data of F(w) also is zero data of E(w). It is obvious that E(w) and F(w) have the same zero data, yet E(w) is a fractional multinomial and F(w) is a quartic multinomial, here they have the same zero data, then we define F(w) is an equivalent error function of E(w). We just calculate that zero data of F(w) when we need to calculate zero of E(w). Obviously the calculation process of F(w) is more convenient than E(w) in an optimization calculation.

To adjust weights w to get a minimum value of the equivalent error function F(w), we first calculate grads of F(w). Then the partial derivation of F(w) can be written as

(11)

(11)

Therefore we will get the descent gradient equation for adjusting weights as follows:

(12)

(12)

Here η refers to learning efficiency and t to number of learning activities. This equation is just a general descent gradient equation.

Since gradient algorithm has limitations of slow convergence that we need accelerate convergent speed, according to Newton iteration algorithm[5], we must calculate the second derivation of error function. From Eq. (11) we have

≡

≡ (13)

(13)

(14)

(14)

After substituting Eq. (14) into Eq. (13) and we will get

(15)

(15)

Consequently we can get an equivalent error function of Hessian matrix:

where  .

.

According to Newton iteration algorithm, we can conclude the iteration equation as follows:

(16)

(16)

where η refers to learning efficiency and t stands for number of learning activities, H is Hessian matrix of error function F(w) and H-1 is inverse matrix. Eq. (16) is defined as adjusting weights equation of Hessian matrix.

Commonly, for avoiding fluctuant affect, we often add impulse factor in the latter part of Eq. (16), then the iteration equation turns into

(17)

(17)

Here  stands for impulse factor.

stands for impulse factor.

4 Application

During the study on management mode of synthetic water resources utilization and economy harmonization of the Dalian City, we need to conduct decision-making schemes selection among level of years 2000 and 2010 of Dalian. Here we will offer process and results of scheme selection at the level of year 2000 in the southern part of Jinzhou.

We can take output indexes maximum of decision system as our target, and divide the whole system into water resources sub-system, economy sub-system and environment sub-system. As hidden layer of model, input indexes of subsystems compose of input layer system, i.e., water resources sub-system (includes ratio of available supply to total need amount of water R1, ratio of major industry to whole industry water consuming R2, ratio of indispensable industry to whole industry water consuming R3), economy sub-system (includes ratio of main industry to whole industry production R4, ratio of indispensable industry to whole industry production R5), environment sub-system that include grade that alternative influence environment as input index R6.

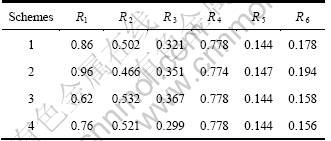

Assume that indexes weights of water resources subsystem are (w11, w12, w13), indexes weights of economy subsystem are (w21, w22), and index weight of environment subsystem is w31; in whole system, weights of subsystems are (w1, w2, w3). Input indexes data of level of year 2000 at the southern part of Jinzhou are listed in Table 1.

Table 1 Input indexes data of level of year 2000 at the southern part of Jinzhou

Using fuzzy optimal binary parallel model[7], we can determine initial data of indexes in model:

(w1, w2, w3)=(0.4, 0.3, 0.3),

(w11, w12, w13)=(0.50, 0.35, 0.15),

(w21, w22)=(0.75, 0.25), w31=(1.0)

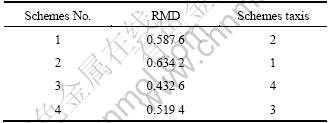

According to the fuzzy optimization Eq. (3), we calculate RMD of schemes normally and get RMD results of level of year 2000 at the southern part of Jinzhou as shown in Table 2.

Table 2 RMD of level of year 2000 at the southern part of Jinzhou

From Table 2 we can see that, first one of schemes taxis is scheme 2 and after consulting experts we know that the industrial water consuming ration of scheme 1 is more reasonable. Then according to the intelligent decision method presented by Chen[8], we can determine whether scheme 1 can be lined first or not. Therefore, we exchange RMD of scheme 1 with scheme 2 and obtain new RMD objective vector of schemes as u=(0.634 2, 0.586 7, 0.432 6, 0.519 4).

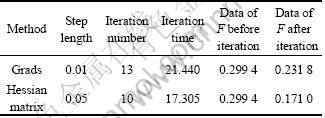

According to equivalent error function of fuzzy optimization presented in this paper, we operate learning training of these two algorithms and get results as shown in Table 3.

Table 3 Results comparison of learning training

Consequently, adjusting results of weights by using grads method are

(0.495 7, 0.389 5, 0.305 1; 0.558 9, 0.386 9, 0.173 9; 0.800 8, 0.258 5; 1.0)

Adjusting results of weights by using Hessian matrix method are

(0.498 5, 0.389 7, 0.305 4; 0.562 6, 0.385 6, 0.172 0; 0.801 0, 0.257 3; 1.0)

The results show that adjusting weights of schemes still satisfy demand, that is to say, scheme 1 can be adopted as first one of schemes taxis.

5 Conclusions

(1) Through analysis of fuzzy optimization model, the paper presents a concept of equivalent error function and simplifies the calculation for fuzzy optimization models.

(2) The paper also puts forward a BP algorithm of Hessian matrix based on Newton iteration method and error function. The method has faster convergent speed than traditional BP gradient algorithm, and application shows that the method is effective.

Reference

[1] Chen S Y, Zhao Y Q. Theory and model of fuzzy optimization[J]. Fuzzy System and Mathematics, 1990(2): 87-91.

[2] Chen S Y. Multi-objective decision making theory of fuzzy optimal neural networks[J]. Journal of Dalian University of Technology, 1997, 37(6): 693-697. (in Chinese)

[3] Chen S Y. Theory and application of engineering fuzzy sets[M]. Beijing: National Defense Industrial Press, 1998: 45-47. (in Chinese)

[4] Tang H W. Practical optimal methods[M]. Dalian: Dalian University of Technology Press, 2000: 65-67. (in Chinese)

[5] Chi Y H. A BP algorithm based binary derivation[J]. Journal of Huazhong University of Technology, 1998, 26(3): 105-107. (in Chinese)

[6] Lin C S. Numerical calculating methods[M]. Beijing: Science Press, 1998: 50-56. (in Chinese)

[7] Chen S Y. The fuzzy sets theory and practice for engineering hydrology and water resources system[M]. Dalian: Dalian University of Technology Press, 1998: 120-135. (in Chinese)

[8] Chen S Y. Fuzzy recognition theory and application for complex water resources system optimization[M]. Changchun: Jilin University Press, 2002: 93-95. (in Chinese)

(Edited by CHEN Can-hua)

Received date: 2011-04-15; Accepted date: 2011-06-15

Corresponding author: GUO Yu; Tel: +86-13710198261; Email: gy_mail@sohu.com